Golden Guardians v Team SoloMid (LCS 2021-01-16) - Recap

So, let’s cut to the chase: our model suggested betting on Golden Guardians, quite substantially in fact, and they lost. Does that mean we were wrong, though? That’s actually a somewhat nuanced question, and we’ll attempt to answer it here.

Broadly speaking, our model estimates the probability that each team wins a match. It does not (and cannot) guarantee who will win. Even if we believed that team A had a 99% chance to win and they lost, that wouldn’t necessarily mean we were wrong. The only way to test it would be to replay that exact match many times (the more the better) and look at the results. If team A won around 99% of those hypothetical replays, our model would have accurately predicted their odds, and reality just happened to deliver the one-in-a-hundred fluke.
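A quick simulation makes the “replay it many times” idea concrete. This is a minimal Python sketch, assuming each replay is independent and team A wins each one with a fixed probability; `simulate_replays` is a hypothetical helper, not part of our model.

```python
import random


def simulate_replays(p_win: float, n_replays: int, seed: int = 0) -> float:
    """Replay a hypothetical match n_replays times, where team A wins each
    replay independently with probability p_win, and return team A's
    observed win rate."""
    rng = random.Random(seed)  # seeded so the run is reproducible
    wins = sum(rng.random() < p_win for _ in range(n_replays))
    return wins / n_replays


for n in (10, 100, 10_000):
    print(n, simulate_replays(0.99, n))
```

With only 10 replays the observed rate can easily come out at 100% or 90%; with 10,000 it should sit very close to 99%.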

Since we can’t actually replay these matches enough times to see what would happen, this sort of analysis is a bit trickier. Look out for a future article going into much more detail on this concept, and how we can validate our results anyway! For now, however, let’s just focus on this game and see what we can glean from it.

Our Prediction

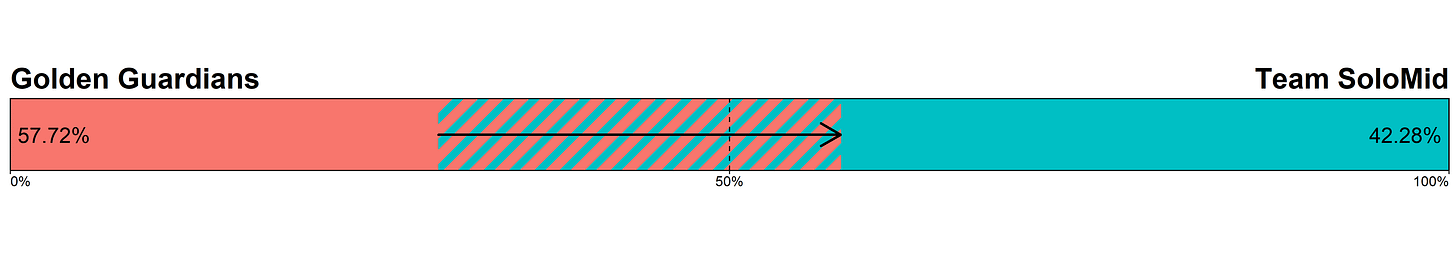

Here was our prediction for the game, at the time:

We believed Golden Guardians were not only undervalued by the market but actually favored to win. The gap between our estimate of their win probability and the market’s implied probability was large enough to suggest a fairly sizable bet:

|Metric |Golden Guardians |Team SoloMid |
|:--------------------------|:----------------|:------------|
|Market Odds (decimal, American) |3.14 (+214) |1.33 (-303) |
|Implied Win Probability |31.85% |75.19% |
|Calculated Win Probability |57.72% |42.28% |
|Expected Value (per $1 bet) |0.8124 |-0.4377 |
|Bet Size |$379.63 |$0 |

We’ve talked about this before, but it’s worth reiterating: we are not betting on GG because they are the favorite; we are betting on GG because that bet has positive expected value (EV)! Even if our model put them at 40% to win, we would still bet on them, because 40% is higher than the 31.85% chance the market’s odds imply. The payout (odds) the market offers is priced to the market’s own estimate of the risk, so when we believe the real risk is lower, we are being disproportionately rewarded for the risk we actually take on. Keep an eye out for a future article on the concept of EV betting; for now, it’s safe to simplify it as this: if we believe a team has a higher chance to win than the market does, we should bet on it. The bigger the difference, the more money we should bet.
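The figures in the table come from a few standard conversions, sketched below in Python. The function names are our own, and one piece is an assumption: the post doesn’t state how the bet size was chosen, but full Kelly sizing on a hypothetical $1,000 bankroll reproduces the $379.63 figure, so that is what `kelly_fraction` uses here.

```python
def implied_probability(decimal_odds: float) -> float:
    """Break-even win probability implied by decimal odds (vig included)."""
    return 1.0 / decimal_odds


def american_odds(decimal_odds: float) -> int:
    """Convert decimal odds to American (moneyline) odds."""
    if decimal_odds >= 2.0:
        return round((decimal_odds - 1.0) * 100)  # underdog: profit on a $100 stake
    return round(-100 / (decimal_odds - 1.0))     # favorite: stake needed to win $100


def expected_value(p_win: float, decimal_odds: float) -> float:
    """Expected profit per $1 staked: p * odds - 1."""
    return p_win * decimal_odds - 1.0


def kelly_fraction(p_win: float, decimal_odds: float) -> float:
    """Kelly stake as a fraction of bankroll; 0 when the bet has no edge.
    (Assumed sizing rule, not confirmed by the post.)"""
    b = decimal_odds - 1.0  # net profit per $1 staked on a win
    return max(0.0, (b * p_win - (1.0 - p_win)) / b)


BANKROLL = 1_000.0  # hypothetical bankroll; not stated in the post
for team, odds, p in (("GG", 3.14, 0.5772), ("TSM", 1.33, 0.4228)):
    print(team, american_odds(odds), f"{implied_probability(odds):.2%}",
          f"{expected_value(p, odds):.4f}",
          f"${kelly_fraction(p, odds) * BANKROLL:.2f}")
```

The same functions show why a 40% estimate would still justify a bet: `expected_value(0.40, 3.14)` is about 0.256, comfortably positive, because 40% is above the 31.85% break-even probability implied by odds of 3.14.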

A note on market odds: as we’ve talked about before, the implied probabilities don’t add up to 100% (here they sum to 107.04%) because of the vig, which we’ll talk about in more detail in a future article. We still have to beat these odds to make a profit, but to get a sense of the market’s actual estimate we can renormalize the two probabilities so that they do add up to 100%, which gives us:

GG (29.76%) v TSM (70.24%)
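The renormalization is just dividing each implied probability by their sum. Here is a minimal Python sketch, assuming simple proportional normalization (one of several ways to remove the vig); `remove_vig` is a hypothetical name.

```python
def remove_vig(*decimal_odds: float) -> list[float]:
    """Scale the implied probabilities of all outcomes so they sum to 1.
    This is plain proportional normalization; other de-vig methods exist."""
    implied = [1.0 / odds for odds in decimal_odds]
    overround = sum(implied)  # greater than 1 when the odds include vig
    return [p / overround for p in implied]


gg, tsm = remove_vig(3.14, 1.33)
print(f"GG {gg:.2%} v TSM {tsm:.2%}")
```

Working directly from the decimal odds gives GG 29.75% v TSM 70.25%; the 0.01-point difference from the figures above presumably comes from renormalizing the already-rounded percentages.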

The Outcome

So, Golden Guardians lost. Was that implausible? Even according to our model, it had a 42.28% chance of happening, which is really not too far off from a coin flip. As mentioned earlier, if we could replay this exact game hundreds of times and GG won 58% of them, we would be satisfied with our model, and, importantly, we would have won a LOT of money.

Let’s do a quick math exercise. Assuming our model was correct, if this matchup occurred 100 times, our bet would pay off about 58 times and lose about 42 times (rounding GG’s 57.72% to 58%). Each winning bet would net a profit of 2.14 * $379.63 = $812.41, while each losing bet would simply lose the $379.63 stake. In total, then, we would earn $812.41 * 58 = $47,119.78 and lose $379.63 * 42 = $15,944.46, for a net profit of $31,175.32. Since this is across 100 games, our expected value (that is, the average winnings per game) would be $311.75.
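The same arithmetic as a short Python sketch, using the stake and odds from the table. It rounds the per-win profit to cents and uses 58 wins out of 100, as the text does, then repeats the calculation with the unrounded 57.72% for comparison; the constant and function names are ours.

```python
STAKE = 379.63        # bet size from the table
DECIMAL_ODDS = 3.14   # GG's market odds
P_WIN = 0.5772        # our model's GG win probability

# Net winnings on a successful bet, rounded to cents: 2.14 * $379.63
profit_per_win = round((DECIMAL_ODDS - 1.0) * STAKE, 2)


def net_profit(wins: int, losses: int) -> float:
    """Total profit from repeating the same fixed-size bet."""
    return wins * profit_per_win - losses * STAKE


total = net_profit(58, 42)  # 57.72% rounded to 58 wins out of 100
print(f"Net over 100 games: ${total:,.2f} (${total / 100:.2f} per game)")

# Without rounding: EV per bet = stake * (p * decimal_odds - 1)
exact_ev = STAKE * (P_WIN * DECIMAL_ODDS - 1.0)
print(f"Unrounded EV per bet: ${exact_ev:.2f}")
```

It reproduces the $31,175.32 net and $311.75 per game; with the unrounded probability, the expected value per bet comes out slightly lower, at about $308.41.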

So, on average, we expect to make $311.75 on this bet, which seems like a pretty great deal. Unfortunately, we can’t actually place this bet 100 times, so any single result can land far from that average. This is variance (pending the aforementioned article on EV betting, you can think of it as how much your actual returns swing around what you expect). The best we can do is place as many bets on as many games as we can, so that we have more shots at the target and our overall results average out closer to the expected value.
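Variance can be put into numbers for this single bet. The Python sketch below computes the standard deviation of the bet’s profit and how it shrinks when results are averaged over many bets. It assumes those bets are independent and identical to this one, which real bets on different games are not, so treat it as an illustration rather than a risk model; the names are ours.

```python
import math

STAKE = 379.63
DECIMAL_ODDS = 3.14
P_WIN = 0.5772  # assumes our model's probability is correct

win_profit = (DECIMAL_ODDS - 1.0) * STAKE  # outcome if GG wins
loss = -STAKE                              # outcome if GG loses

mean = P_WIN * win_profit + (1 - P_WIN) * loss
# For a two-outcome bet: std dev = (gap between outcomes) * sqrt(p * q)
std_dev = (win_profit - loss) * math.sqrt(P_WIN * (1 - P_WIN))


def std_error_of_average(n_bets: int) -> float:
    """Std dev of the average profit over n independent, identical bets."""
    return std_dev / math.sqrt(n_bets)


print(f"mean ${mean:.2f}, single-bet std dev ${std_dev:.2f}")
for n in (1, 10, 100, 1000):
    print(n, f"${std_error_of_average(n):.2f}")
```

A single bet has a standard deviation of almost $590, nearly twice its expected value of about $308, but averaged over 100 such bets that spread falls to under $60.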

All of this, of course, depends on one very important assumption: that our model is correct. Given that we can’t actually simulate this game as many times as we want, how do we decide who was more correct in their predictions: our model, or the market?

The Game

At first glance, it would appear that the market was more accurate: it had TSM pretty heavily favored, and TSM did in fact win. However, anyone who watched this game would probably tell you that it felt pretty even until the very end. This is where the analysis becomes a bit more qualitative; there isn’t a clean way to assign odds based on how a game felt, so we’re left looking at how the game unfolded and speculating about which odds feel more correct for what we witnessed.

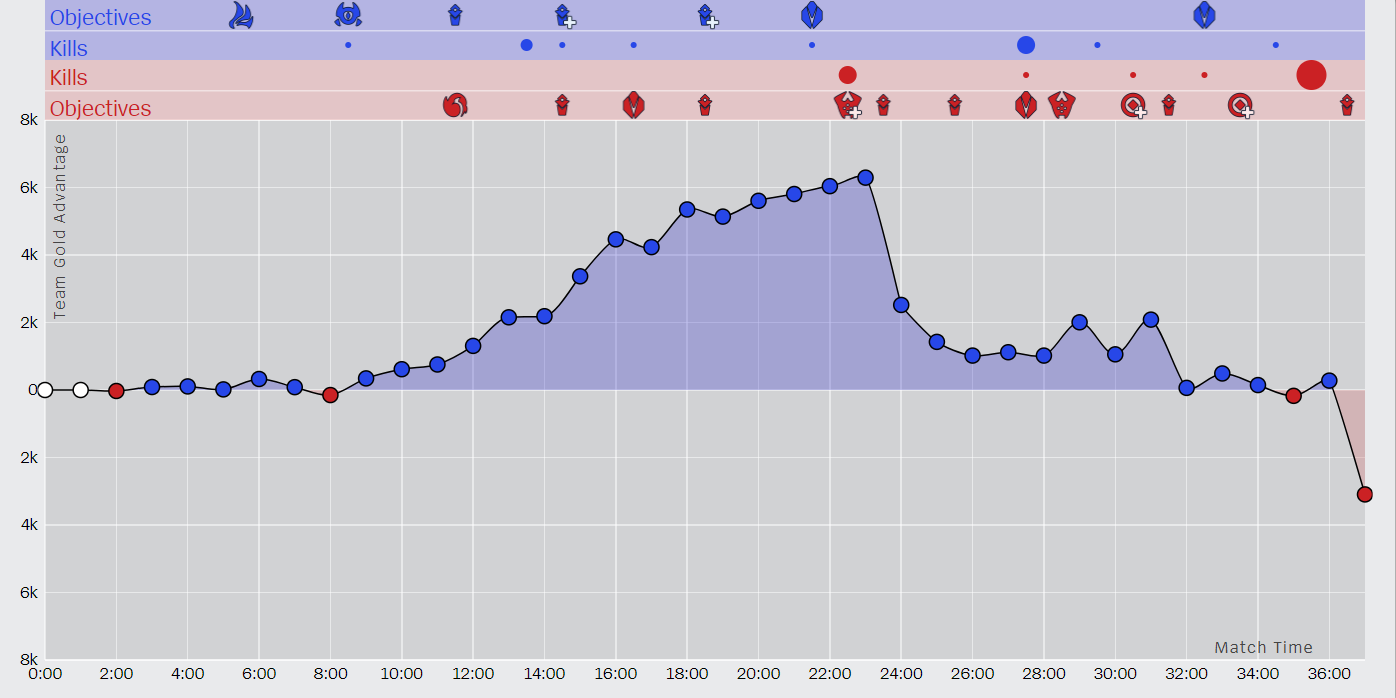

Well, here’s the course of the game, plotted out:

(GG is in blue, TSM in red)

For the first 10 minutes, the game was remarkably even - GG picked up a dragon and a kill, but gold was almost dead flat between the two teams.

For the next 10 minutes, GG accrued a steady advantage, peaking at roughly a 6k gold lead, and getting an additional 5 kills as well as 5 towers to TSM’s 2.

At this point, does this look like a 30/70 matchup? GG has certainly at least held their own, if not dominated, and their prospects are looking very good.

GG then goes for Baron Nashor, resulting in a catastrophic Baron steal by TSM that also spirals into three kills. Momentum swings in TSM’s favor, but even now the game is still close: gold still favors GG slightly, and outside of Baron and a couple of towers, no major objectives have been lost. Perhaps GG thinks they’re on the back foot, or their confidence is shaken, because they shy away from large confrontations, and TSM is able to destroy a couple of inhibitors pretty much for free.

Then, on the back of a kill, GG decides to try for Baron again in full view of TSM. Their coordination falters, and TSM cleans house, acing GG without losing a single champion. This, obviously, ends the game.

The Takeaway

That was a lot of words to say that we still lost the bet. But while we lost the bet, we would still argue that our prediction wasn’t wrong. If we could place this same bet again, we would. Looking at the game itself, GG was steadily eking out an advantage until a single bad team fight put them on the back foot, which led to another desperation play that sealed their fate. If this game were repeated, it seems unlikely that GG would throw this hard again, and it certainly didn’t seem as though TSM were always in control of the game. The game felt relatively even, not 30/70 in TSM’s favor, and if it were replayed a hundred times, it seems fair to say that GG would win more than 30 of them.

The other takeaway, of course, is that Golden Guardians should probably spend some time thinking about their Baron approach.

For more in-depth match analysis, predictions, and quantitative betting strategy tips, subscribe to stay in the loop. You can also follow us on Twitter (@AlacrityBet) or Instagram (@alacritybetting) for quick prediction snapshots of upcoming matches. Alacrity.GG is your fastest path from simply gambling to beating the market.